Top 11 Best Open Source Web Crawlers In 2022

This article discusses the best and most popular open source web crawlers. Discover the free software packages, frameworks, and SDKs that can get your web crawling project off the ground quickly.

In 2020, there were an estimated 40 zettabytes of data online. Since one zettabyte equals one billion terabytes, that is a substantial amount of data. Businesses and organisations need big data to gain market insights, but an estimated 80% of it is unstructured. To be used efficiently, data must be structured, that is, in a machine-readable format.

Besides internal statistics, research, and business databases, the web itself is a fantastic source of data. Web scraping and web crawling are two terms used to describe retrieving data from the internet. What’s the difference? Search engines typically use web crawlers to scan websites for links and pages and then extract their content indiscriminately. A web scraper, on the other hand, uses a specific script, often customised to a particular website and the relevant parts of its pages, to extract information. It works well for turning unstructured data into structured databases. Read our blog post on web crawling vs. web scraping to find out more about the differences between the two.

What is a web crawler?

Web crawlers help you collect data from public websites, find information, and index web pages. Crawlers also examine the links between URLs on a website to work out how its pages relate to one another. This makes it easier for tools such as search engines to present a condensed version of the website as search results, and it helps you study the website from a wider perspective.
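To make the idea of mapping a site’s link structure concrete, here is a minimal breadth-first crawler written in Python with only the standard library. It is a sketch, not how any particular tool works: the `crawl` and `LinkExtractor` names are our own, and the `PAGES` dictionary is a hypothetical stand-in for real HTTP requests so that the example runs offline.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urldefrag, urljoin, urlparse


class LinkExtractor(HTMLParser):
    """Collects the href value of every <a> tag in an HTML document."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def crawl(start_url, fetch, max_pages=100):
    """Breadth-first crawl returning {page_url: [same-site URLs it links to]}.

    `fetch` maps a URL to its HTML, or returns None if the page is unavailable.
    """
    site = urlparse(start_url).netloc
    graph = {}
    queue = deque([start_url])
    seen = {start_url}
    while queue and len(graph) < max_pages:
        url = queue.popleft()
        html = fetch(url)
        if html is None:
            continue
        parser = LinkExtractor()
        parser.feed(html)
        links = []
        for href in parser.links:
            # Resolve relative links and drop #fragments so each page has one URL.
            link, _fragment = urldefrag(urljoin(url, href))
            if urlparse(link).netloc != site:
                continue  # ignore links that leave the website
            links.append(link)
            if link not in seen:
                seen.add(link)
                queue.append(link)
        graph[url] = links
    return graph


# Hypothetical in-memory website standing in for real HTTP requests.
PAGES = {
    "https://example.com/": '<a href="/about">About</a> <a href="/blog/">Blog</a>',
    "https://example.com/about": '<a href="/">Home</a>',
    "https://example.com/blog/": '<a href="post-1#top">Post</a> <a href="https://other.org/">Elsewhere</a>',
    "https://example.com/blog/post-1": "<p>No links here.</p>",
}

graph = crawl("https://example.com/", PAGES.get)
for page, links in graph.items():
    print(page, "->", links)
```

Swapping `PAGES.get` for a function that downloads a URL would turn this into a real, if very basic, crawler; the resulting dictionary is the site’s link graph.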

Open-source web crawlers: what are they?

When software or an API is open source, its source code is freely accessible to everyone, and the code can even be adjusted and improved to meet your requirements. The same is true of open source web crawlers: you can download and use them for free and modify them for your use case.

Is crawling a website permitted?

Crawlers and scrapers are tools for automating large-scale data extraction. For instance, instead of manually copying a product list from an online store, you can have a crawler do it for you. Even where crawling is allowed, you must take care not to collect sensitive data, such as personal information or copyrighted material.
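One common way websites signal which parts automated clients may fetch is a `robots.txt` file, and Python’s standard `urllib.robotparser` module can read it. The sketch below parses a hypothetical `robots.txt` from a string so that it runs offline; the `allowed` helper and the `my-crawler` user agent are our own illustrative names. Honouring these rules is good practice, though it does not replace the care described above.

```python
from urllib.robotparser import RobotFileParser

# Hypothetical robots.txt contents. Against a live site you would instead call
# parser.set_url("https://example.com/robots.txt") followed by parser.read().
ROBOTS_TXT = """\
User-agent: *
Disallow: /private/
Crawl-delay: 5
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())


def allowed(url, user_agent="my-crawler"):
    """Return True if the parsed robots.txt lets `user_agent` fetch `url`."""
    return parser.can_fetch(user_agent, url)


print(allowed("https://example.com/products"))       # True
print(allowed("https://example.com/private/users"))  # False
print(parser.crawl_delay("my-crawler"))              # 5 (seconds between requests)
```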

Top 11 Best Open Source Web Crawlers In 2022

Now that we understand what web crawlers are and what they are used for, let’s examine some of the most popular open-source crawling tools available online:

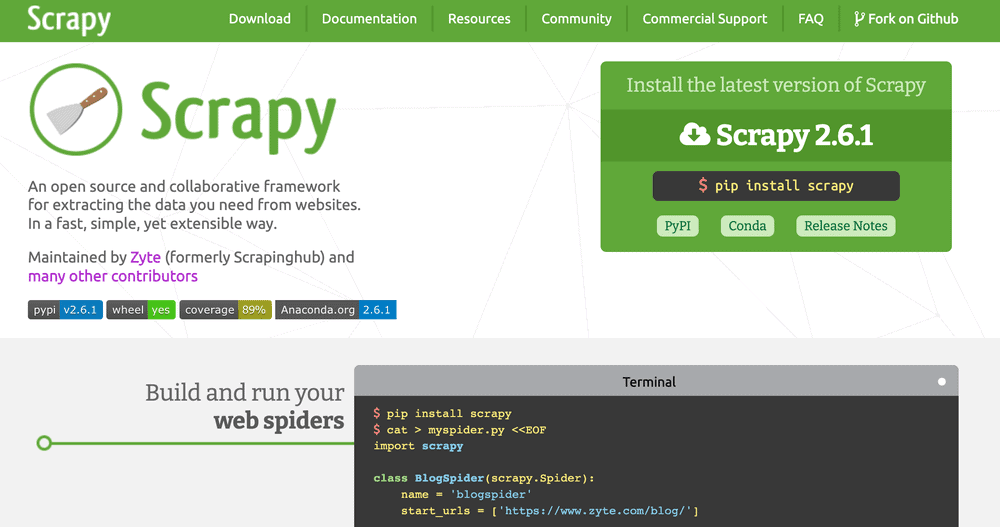

1. Scrapy

Scrapy is one of the most widely used open source web crawling frameworks, written in Python, and it is also well suited to large-scale web scraping. Because requests are made asynchronously and handled in parallel rather than one at a time, crawling is very efficient.
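Scrapy’s speed comes from not waiting for one response before sending the next request. Scrapy itself is built on the Twisted networking engine; the sketch below does not use Scrapy but illustrates the same idea with Python’s `asyncio`. The `fake_fetch` coroutine is a hypothetical stand-in that simulates network latency with `asyncio.sleep`.

```python
import asyncio
import time


async def fake_fetch(url, delay=0.1):
    """Stand-in for a network request: waits `delay` seconds, then returns a body."""
    await asyncio.sleep(delay)
    return f"<html>{url}</html>"


async def crawl_sequential(urls):
    # Each request starts only after the previous one has finished.
    return [await fake_fetch(url) for url in urls]


async def crawl_concurrent(urls):
    # All requests are scheduled at once, so their waiting time overlaps.
    return await asyncio.gather(*(fake_fetch(url) for url in urls))


def timed(coroutine):
    """Run a coroutine to completion and return (result, elapsed seconds)."""
    start = time.perf_counter()
    result = asyncio.run(coroutine)
    return result, time.perf_counter() - start


urls = [f"https://example.com/page/{i}" for i in range(5)]
seq_pages, seq_time = timed(crawl_sequential(urls))
con_pages, con_time = timed(crawl_concurrent(urls))
print(f"sequential: {seq_time:.2f}s, concurrent: {con_time:.2f}s")
```

With five simulated requests of 0.1 seconds each, the sequential version takes about half a second, while the concurrent version finishes in roughly the time of a single request.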

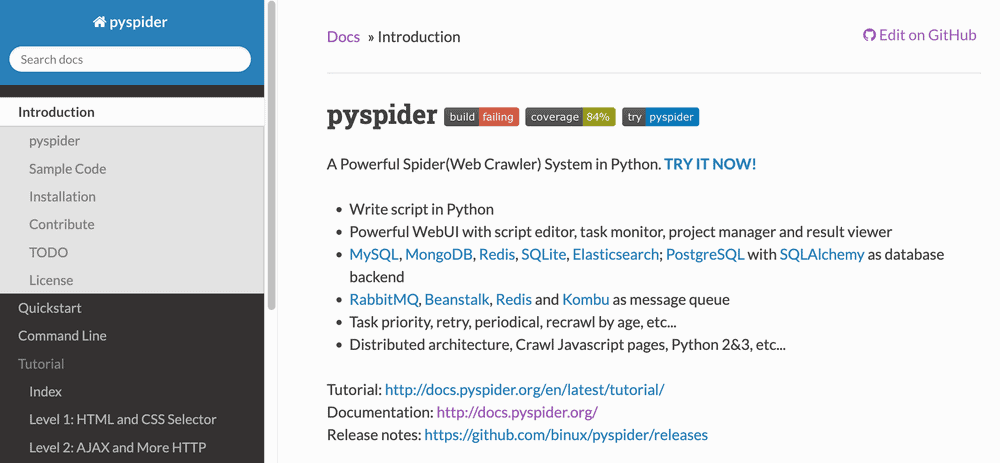

2. Pyspider

Pyspider is a powerful open-source spider (crawler) system written in Python. Unlike many other crawling tools, it offers a script editor, task monitor, project manager, and result viewer in addition to data extraction.

3. Webmagic

WebMagic is a Java framework for building scalable crawlers that makes crawler development easier. It covers every stage of a crawler’s life cycle, including downloading, URL management, and content extraction.
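WebMagic splits these stages into separate components: a Downloader, a PageProcessor for extraction, a Scheduler for URL management, and a Pipeline for handling results. The Python sketch below mirrors that division of labour to show how the stages fit together; the class and function names are illustrative rather than WebMagic’s Java API, and `SITE` is a hypothetical in-memory site.

```python
from collections import deque


class Scheduler:
    """URL management: queues URLs to visit and ignores ones already seen."""

    def __init__(self):
        self._queue = deque()
        self._seen = set()

    def push(self, url):
        if url not in self._seen:
            self._seen.add(url)
            self._queue.append(url)

    def poll(self):
        return self._queue.popleft() if self._queue else None


# Hypothetical in-memory site used instead of real HTTP downloads.
SITE = {
    "/": {"title": "Home", "links": ["/a", "/b"]},
    "/a": {"title": "Page A", "links": ["/"]},
    "/b": {"title": "Page B", "links": ["/a", "/missing"]},
}


def download(url):
    """Downloading stage: returns the page, or None if it cannot be fetched."""
    return SITE.get(url)


def process(page):
    """Content extraction stage: returns (extracted item, newly discovered URLs)."""
    return {"title": page["title"]}, page["links"]


def run(start_url, pipeline):
    """Drive the life cycle: schedule, download, extract, then hand off results."""
    scheduler = Scheduler()
    scheduler.push(start_url)
    while (url := scheduler.poll()) is not None:
        page = download(url)
        if page is None:
            continue
        item, links = process(page)
        pipeline(url, item)  # e.g. print, write to a file, insert into a database
        for link in links:
            scheduler.push(link)


titles = {}


def collect(url, item):
    titles[url] = item["title"]


run("/", collect)
print(titles)
```

Keeping the stages separate means one part, such as the scheduler’s deduplication strategy, can be swapped without touching the others.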

4. Node Crawler

A popular and efficient package for crawling web pages with Node.js. It is based on Cheerio and offers a wide range of options for customising how you crawl or scrape the web, including the ability to cap the number of requests and set the interval between them.
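Capping concurrent requests and spacing them out keeps a crawler from overloading the target site. Node Crawler is a JavaScript package, so the sketch below is not its API: it is a hypothetical Python `Throttle` helper, built on `asyncio.Semaphore` and `asyncio.Lock`, that shows how both limits can be enforced, with `asyncio.sleep` standing in for network time.

```python
import asyncio
import time


class Throttle:
    """Hypothetical helper: caps concurrent requests and spaces out their start times."""

    def __init__(self, max_concurrent, min_interval):
        self._slots = asyncio.Semaphore(max_concurrent)  # cap on simultaneous requests
        self._lock = asyncio.Lock()                       # serialises start-time checks
        self._min_interval = min_interval                 # seconds between request starts
        self._last_start = float("-inf")

    async def __aenter__(self):
        await self._slots.acquire()
        try:
            async with self._lock:
                # Loop in case the timer fires slightly early.
                while (wait := self._last_start + self._min_interval - time.monotonic()) > 0:
                    await asyncio.sleep(wait)
                self._last_start = time.monotonic()
        except BaseException:
            self._slots.release()  # don't leak a slot if cancelled while waiting
            raise
        return self

    async def __aexit__(self, exc_type, exc, tb):
        self._slots.release()
        return False


in_flight = 0
peak_in_flight = 0
start_times = []


async def fetch(url, throttle):
    global in_flight, peak_in_flight
    async with throttle:
        start_times.append(time.monotonic())
        in_flight += 1
        peak_in_flight = max(peak_in_flight, in_flight)
        await asyncio.sleep(0.05)  # simulated network time
        in_flight -= 1
        return url


async def main():
    throttle = Throttle(max_concurrent=2, min_interval=0.02)
    urls = [f"https://example.com/{i}" for i in range(6)]
    return await asyncio.gather(*(fetch(url, throttle) for url in urls))


results = asyncio.run(main())
print(results, "peak concurrency:", peak_in_flight)
```

The semaphore bounds how many requests run at once, while the lock makes requests check and update the last start time one at a time, so consecutive requests start at least `min_interval` seconds apart.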

5. Beautiful Soup

Beautiful Soup is an open-source Python package for parsing HTML and XML documents. Once it has built a parse tree, extracting data from a page is much simpler. It is not as fast as Scrapy, but its key strengths are its ease of use and the community support available when problems occur.
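The sketch below shows what working with a parse tree looks like. To stay dependency-free it uses Python’s built-in `xml.etree.ElementTree` on a small, hypothetical, well-formed XML document rather than Beautiful Soup itself; Beautiful Soup follows the same tree-based approach but also copes with messy real-world HTML.

```python
import xml.etree.ElementTree as ET

# Hypothetical product listing used as sample input.
DOCUMENT = """
<catalog>
  <product sku="A1"><name>Desk lamp</name><price currency="EUR">24.90</price></product>
  <product sku="B2"><name>Office chair</name><price currency="EUR">129.00</price></product>
</catalog>
"""

# Parsing once builds a tree of elements...
root = ET.fromstring(DOCUMENT.strip())

# ...which can then be navigated by tag and attribute instead of scanning raw text.
products = [
    {
        "sku": product.get("sku"),
        "name": product.findtext("name"),
        "price": float(product.findtext("price")),
        "currency": product.find("price").get("currency"),
    }
    for product in root.iter("product")
]
print(products)
```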

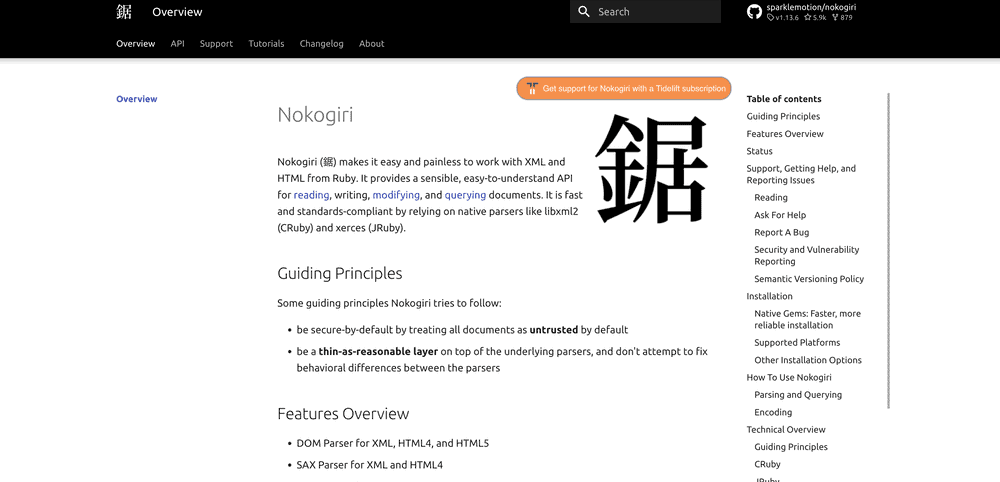

6. Nokogiri

Like Beautiful Soup, Nokogiri is excellent at parsing HTML and XML files, in this case with Ruby, a programming language that is well suited to those new to web development. Nokogiri relies on native parsers such as libxml2 (C) and, on JRuby, Xerces (Java).

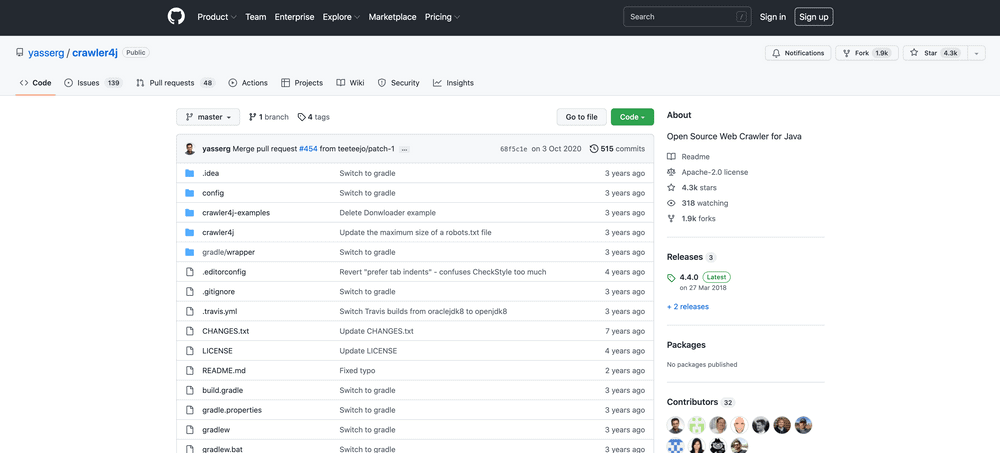

7. Crawler4j

Crawler4j is an open-source Java web crawler with a simple, user-friendly interface. One of its benefits is the ability to build a multi-threaded crawler, although it can suffer from high memory usage.
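Crawler4j lets you choose how many crawler threads to run. As a Python illustration of the idea (not Crawler4j’s Java API), the sketch below fetches each layer of newly discovered pages in parallel with a `ThreadPoolExecutor`. The `PAGES` link map and the `fetch_links` function are hypothetical stand-ins for downloading and parsing real pages.

```python
from concurrent.futures import ThreadPoolExecutor

# Hypothetical link map: page -> pages it links to.
PAGES = {
    "/": ["/a", "/b"],
    "/a": ["/c"],
    "/b": ["/c", "/d"],
    "/c": [],
    "/d": ["/"],
}


def fetch_links(url):
    """Pretend to download `url` and return the links found on it."""
    return PAGES.get(url, [])


def parallel_crawl(start, num_threads=4):
    """Crawl layer by layer, fetching each layer's pages on a pool of threads."""
    visited = {start}
    frontier = [start]
    with ThreadPoolExecutor(max_workers=num_threads) as pool:
        while frontier:
            # Fetch every page in the current frontier concurrently.
            results = pool.map(fetch_links, frontier)
            next_frontier = []
            # Results are merged on the main thread, so `visited` needs no lock.
            for links in results:
                for link in links:
                    if link not in visited:
                        visited.add(link)
                        next_frontier.append(link)
            frontier = next_frontier
    return visited


print(sorted(parallel_crawl("/")))
```

Merging results on the main thread avoids locking; a crawler whose worker threads share a single frontier would need a thread-safe queue and visited set instead.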

8. MechanicalSoup

A Python library for automating interaction with websites, inspired by the Mechanize library and built on the aforementioned Beautiful Soup. It works well for handling forms, following links, and dealing with redirects on a website.
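Form handling boils down to reading a form’s action, method, and fields (including hidden ones), filling in values, and submitting them. MechanicalSoup’s `StatefulBrowser` does this for you; the standard-library sketch below, with a hypothetical `FormParser` class and sample login page, only shows what happens under the hood and does not send any request.

```python
from html.parser import HTMLParser
from urllib.parse import urlencode, urljoin


class FormParser(HTMLParser):
    """Records the action, method and named <input> fields of the first <form>."""

    def __init__(self):
        super().__init__()
        self.action = None
        self.method = "get"
        self.fields = {}
        self._in_form = False
        self._done = False

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if tag == "form" and not self._done:
            self._in_form = True
            self.action = attrs.get("action", "")
            self.method = (attrs.get("method") or "get").lower()
        elif tag == "input" and self._in_form and attrs.get("name"):
            # Keep default values (e.g. hidden CSRF tokens) so they are resubmitted.
            self.fields[attrs["name"]] = attrs.get("value") or ""

    def handle_endtag(self, tag):
        if tag == "form" and self._in_form:
            self._in_form = False
            self._done = True


PAGE_URL = "https://example.com/login"  # hypothetical page
HTML = """
<form action="/session" method="POST">
  <input type="hidden" name="csrf_token" value="abc123">
  <input type="text" name="username">
  <input type="password" name="password">
  <button type="submit">Log in</button>
</form>
"""

form = FormParser()
form.feed(HTML)
form.fields.update(username="alice", password="s3cret")
target = urljoin(PAGE_URL, form.action)  # where the form would be submitted
body = urlencode(form.fields)            # the encoded request body
print(form.method, target, body)
```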

9. Apify SDK

An open-source, scalable JavaScript library for crawling and scraping. Depending on your use case, the Apify SDK offers the Cheerio Crawler, Puppeteer Crawler, Playwright Crawler, or Basic Crawler. You can also deep crawl a website, rotate proxies, or work around website fingerprinting protections.
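Proxy rotation means sending successive requests through different proxy servers. The Apify SDK manages this for you; the sketch below is a plain-Python illustration of simple round-robin rotation using `itertools.cycle` and `urllib.request.ProxyHandler`. The proxy addresses and the `opener_for_next_proxy` helper are hypothetical, and the example only builds openers without sending any requests.

```python
import itertools
import urllib.request

# Hypothetical proxy endpoints; replace with your own proxy servers.
PROXIES = [
    "http://proxy-1.example:8000",
    "http://proxy-2.example:8000",
    "http://proxy-3.example:8000",
]

_rotation = itertools.cycle(PROXIES)


def opener_for_next_proxy():
    """Return (proxy_url, opener) routing HTTP and HTTPS traffic via the next proxy."""
    proxy = next(_rotation)
    handler = urllib.request.ProxyHandler({"http": proxy, "https": proxy})
    return proxy, urllib.request.build_opener(handler)


# Each real request would call opener.open(url); here we only record the proxy used.
used = [opener_for_next_proxy()[0] for _ in range(4)]
print(used)
```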

10. Apache Nutch

Apache Nutch is a flexible, open-source web crawler that is often used in data analysis. It can retrieve content via protocols such as HTTP, HTTPS, and FTP, and extract textual data from document formats such as HTML, PDF, RSS, and Atom.
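As an example of the kind of textual extraction involved, the sketch below pulls entry titles, links, and summaries out of an Atom feed with Python’s built-in `xml.etree.ElementTree`. Nutch uses its own parsing plugins, so this illustrates the task rather than Nutch itself; the feed content is hypothetical. Atom elements live in an XML namespace, so each query passes a prefix mapping.

```python
import xml.etree.ElementTree as ET

ATOM_NS = {"atom": "http://www.w3.org/2005/Atom"}

# Hypothetical Atom feed used as sample input.
FEED = """<feed xmlns="http://www.w3.org/2005/Atom">
  <title>Example blog</title>
  <entry>
    <title>First post</title>
    <link href="https://example.com/posts/1"/>
    <summary>Hello, world.</summary>
  </entry>
  <entry>
    <title>Second post</title>
    <link href="https://example.com/posts/2"/>
    <summary>More text.</summary>
  </entry>
</feed>"""

root = ET.fromstring(FEED)
entries = [
    {
        "title": entry.findtext("atom:title", namespaces=ATOM_NS),
        "url": entry.find("atom:link", ATOM_NS).get("href"),
        "summary": entry.findtext("atom:summary", namespaces=ATOM_NS),
    }
    for entry in root.findall("atom:entry", ATOM_NS)
]
for entry in entries:
    print(entry["title"], entry["url"])
```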

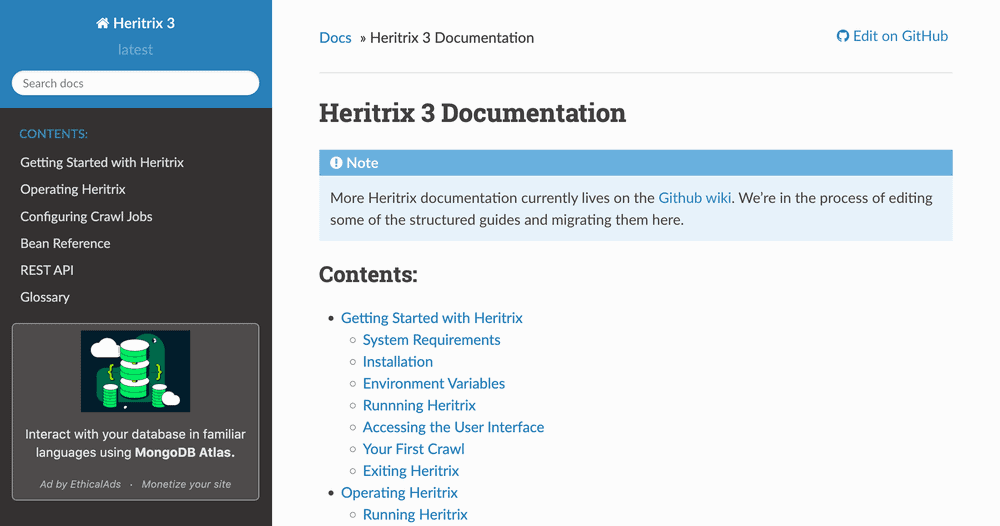

11. Heritrix

Heritrix is an open-source crawler created by the Internet Archive and used primarily for web archiving. It collects a large amount of information, such as domains, exact site hosts, and URI patterns, but it needs some fine-tuning for more complex tasks.
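Domains, exact hosts, and URI patterns are also common ways to limit which URLs a crawl follows (its scope), and Heritrix lets you configure such rules. The hypothetical `in_scope` function below is a simplified Python sketch of those three kinds of check, not Heritrix’s own configuration format.

```python
import re
from urllib.parse import urlparse


def in_scope(url, *, domain=None, host=None, pattern=None):
    """Return True if `url` passes every scope rule that is set.

    domain:  accept this domain and any subdomain (e.g. "example.com")
    host:    accept only this exact host (e.g. "www.example.com")
    pattern: regular expression the full URI must match
    """
    hostname = (urlparse(url).hostname or "").lower()
    if domain is not None and not (hostname == domain or hostname.endswith("." + domain)):
        return False
    if host is not None and hostname != host:
        return False
    if pattern is not None and not re.search(pattern, url):
        return False
    return True


urls = [
    "https://www.example.com/news/2022/01/item",
    "https://blog.example.com/post",
    "https://notexample.com/",
    "https://www.example.com/login",
]
print([u for u in urls if in_scope(u, domain="example.com")])
print([u for u in urls if in_scope(u, host="www.example.com")])
print([u for u in urls if in_scope(u, domain="example.com", pattern=r"/news/\d{4}/")])
```

The domain check compares against `"." + domain` so that a look-alike host such as `notexample.com` is not mistaken for a subdomain.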

Last, but not least…

When we first launched Apify in 2015, we had just the Apify Crawler. Since then, we have developed Apify Store, which contains hundreds of ready-to-use scrapers and crawlers (we call them actors) that can quickly gather data from a variety of websites. However, despite the availability of so many specialised scrapers, we still needed to offer our users a simple, general-purpose crawling and scraping tool. So we created Web Scraper. This actor uses JavaScript to retrieve data from the web and is based on the Node.js library Puppeteer. Simply enter the websites you want to crawl and the page content you want to retrieve, following the instructions in our video tutorial.